Object Detection Tutorial#


Start EVA server#

We reuse the start-server notebook to launch the EVA server.

!wget -nc "https://raw.githubusercontent.com/georgia-tech-db/eva/master/tutorials/00-start-eva-server.ipynb"

%run 00-start-eva-server.ipynb

cursor = connect_to_server()

File ‘00-start-eva-server.ipynb’ already there; not retrieving.

nohup eva_server > eva.log 2>&1 &

Note: you may need to restart the kernel to use updated packages.

Download the Videos#

# Getting the video files

!wget -nc https://www.dropbox.com/s/k00wge9exwkfxz6/ua_detrac.mp4

# Getting the Yolo object detector

!wget -nc https://raw.githubusercontent.com/georgia-tech-db/eva/master/eva/udfs/yolo_object_detector.py

File ‘ua_detrac.mp4’ already there; not retrieving.

File ‘yolo_object_detector.py’ already there; not retrieving.

Load the surveillance videos for analysis#

We use a wildcard pattern to load all matching videos into the table#

cursor.execute('DROP TABLE ObjectDetectionVideos')

response = cursor.fetch_all()

print(response)

cursor.execute('LOAD VIDEO "*.mp4" INTO ObjectDetectionVideos;')

response = cursor.fetch_all()

print(response)

@status: ResponseStatus.SUCCESS

@batch:

0

0 Table Successfully dropped: ObjectDetectionVideos

@query_time: 0.05684273294173181

@status: ResponseStatus.SUCCESS

@batch:

0

0 Number of loaded VIDEO: 10

@query_time: 0.23361483099870384
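The `"*.mp4"` argument in the `LOAD VIDEO` statement reads as a shell-style wildcard (a leading `*` would not be a valid regular expression). As a rough illustration of which file names such a pattern selects, the sketch below uses Python's standard `fnmatch` module. The directory listing is made up, and this is not a description of how EVA resolves the pattern internally.

```python
from fnmatch import fnmatch

# Hypothetical directory listing, for illustration only.
files = ["ua_detrac.mp4", "yolo_object_detector.py", "eva.log", "video.mp4"]

# Keep the names that match the wildcard used in the LOAD VIDEO statement.
matched = [name for name in files if fnmatch(name, "*.mp4")]
print(matched)  # ['ua_detrac.mp4', 'video.mp4']
```

Under this reading, every `.mp4` file in the working directory matches, so it may be worth checking the directory contents before loading.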

Visualize Video#

from IPython.display import Video

Video("ua_detrac.mp4", embed=True)

Register the YOLO Object Detector as a User-Defined Function (UDF) in EVA#

cursor.execute("""CREATE UDF IF NOT EXISTS YoloV5

INPUT (frame NDARRAY UINT8(3, ANYDIM, ANYDIM))

OUTPUT (labels NDARRAY STR(ANYDIM), bboxes NDARRAY FLOAT32(ANYDIM, 4),

scores NDARRAY FLOAT32(ANYDIM))

TYPE Classification

IMPL 'yolo_object_detector.py';

""")

response = cursor.fetch_all()

print(response)

@status: ResponseStatus.SUCCESS

@batch:

0

0 UDF YoloV5 already exists, nothing added.

@query_time: 0.01149210100993514
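The `OUTPUT` clause declares three arrays whose first dimension is the number of detections in a frame: `labels` with shape `(N,)`, `bboxes` with shape `(N, 4)`, and `scores` with shape `(N,)`. The sketch below checks that contract on plain Python lists. The helper `check_detection` is hypothetical and not part of EVA, and the sample values are illustrative.

```python
def check_detection(labels, bboxes, scores):
    """Return True if the three outputs describe the same N detections."""
    n = len(labels)
    same_length = len(bboxes) == n and len(scores) == n
    # Every bounding box needs four coordinates: x1, y1, x2, y2.
    four_coords = all(len(box) == 4 for box in bboxes)
    return same_length and four_coords


# Illustrative values for a frame with two detections.
labels = ["car", "truck"]
bboxes = [[613.0, 216.3, 718.2, 275.6], [150.9, 468.4, 279.1, 535.2]]
scores = [0.72, 0.69]

print(check_detection(labels, bboxes, scores))      # True
print(check_detection(labels, bboxes[:1], scores))  # False: one box is missing
```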

Run the Object Detector on the video#

cursor.execute("""SELECT id, YoloV5(data)

FROM ObjectDetectionVideos

WHERE id < 20""")

response = cursor.fetch_all()

print(response)

@status: ResponseStatus.SUCCESS

@batch:

objectdetectionvideos.id \

0 0

1 1

2 2

3 3

4 4

.. ...

185 15

186 16

187 17

188 18

189 19

yolov5.labels \

0 [car, car, car, car, truck, car, car, cell phone, car, car, truck, car, cell phone]

1 [car, car, car, car, truck, cell phone, car, cell phone, car, car, truck, bus, car, car, bus]

2 [cell phone, car, cell phone, truck, car, car, car, truck, truck]

3 [cell phone, car, traffic light, car, truck, cell phone, car, truck, truck, truck, car]

4 [car, truck, cell phone, car, traffic light, car, truck, car, car, truck, car, car, bus, truck]

.. ...

185 [car, car, car, car, car, car, car, person, car, car, car, motorcycle, car, car, car, motorcycle...

186 [car, car, car, car, car, car, person, car, car, car, car, motorcycle, motorcycle, truck, car, c...

187 [car, car, car, car, car, car, car, person, car, car, car, car, car, truck, motorcycle, motorcyc...

188 [car, car, car, car, car, car, car, car, car, person, car, car, car, bus, truck, motorcycle, car...

189 [car, car, car, car, car, car, car, car, car, person, car, car, car, car, truck, bus, car, car, ...

yolov5.bboxes \

0 0 [613.0485229492188, 216.29083251953125, 718.1734619140625, 275.6402282714844]

1 ...

1 0 [614.474365234375, 217.62545776367188, 721.433837890625, 278.5963134765625]

1 [...

2 0 [150.92086791992188, 468.4427490234375, 279.14739990234375, 535.2306518554688]

1 ...

3 0 [153.17645263671875, 463.0412292480469, 280.0609436035156, 534.4542846679688]

1 ...

4 0 [847.2015380859375, 289.1953430175781, 959.904052734375, 356.5442810058594]

1 [...

.. ...

185 0 [641.4051513671875, 224.3956298828125, 757.6414794921875, 290.0587158203125]

1 ...

186 0 [644.232177734375, 225.87051391601562, 761.1083984375, 290.7424011230469]

1 ...

187 0 [646.2791137695312, 225.9928436279297, 763.2777709960938, 291.8134765625]

1 ...

188 0 [647.1568603515625, 226.51370239257812, 765.8187866210938, 292.62884521484375]

1 ...

189 0 [648.61767578125, 227.11984252929688, 768.1045532226562, 293.3794250488281]

1 ...

yolov5.scores

0 [0.7243762016296387, 0.6949567794799805, 0.507372260093689, 0.48990708589553833, 0.4015621244907...

1 [0.700208842754364, 0.6787835955619812, 0.5990053415298462, 0.5681858062744141, 0.51617020368576...

2 [0.7441924810409546, 0.6931878924369812, 0.6251559853553772, 0.5646522641181946, 0.5072109699249...

3 [0.7614237666130066, 0.7540610432624817, 0.651155412197113, 0.5523987412452698, 0.48905572295188...

4 [0.7324366569519043, 0.5914739966392517, 0.5453053116798401, 0.5058165192604065, 0.5009402036666...

.. ...

185 [0.8875618577003479, 0.8631888031959534, 0.8474202752113342, 0.8387584686279297, 0.8247876167297...

186 [0.8969655632972717, 0.8630247712135315, 0.8239787817001343, 0.8124074339866638, 0.8089078664779...

187 [0.8984967470169067, 0.8612020015716553, 0.8340322375297546, 0.8170915842056274, 0.8132005929946...

188 [0.8983428478240967, 0.860016942024231, 0.8409522175788879, 0.8305366635322571, 0.81345069408416...

189 [0.8998422622680664, 0.8530005216598511, 0.8488077521324158, 0.835029125213623, 0.81902801990509...

[190 rows x 4 columns]

@query_time: 12.921193392947316
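Each row of the result holds parallel lists of labels and scores for one frame, so a common next step is to discard low-confidence detections and count what remains. The following sketch does this on plain Python data. The function name `count_confident_labels`, the 0.5 threshold, and the sample row are assumptions for illustration and are not taken from the query output.

```python
from collections import Counter


def count_confident_labels(labels, scores, threshold=0.5):
    """Count the labels whose score is at least `threshold`."""
    kept = [label for label, score in zip(labels, scores) if score >= threshold]
    return Counter(kept)


# Illustrative row: parallel lists, shaped like yolov5.labels and yolov5.scores.
labels = ["car", "car", "truck", "cell phone", "car"]
scores = [0.72, 0.69, 0.51, 0.40, 0.35]

counts = count_confident_labels(labels, scores)
print(counts)  # Counter({'car': 2, 'truck': 1})
```

With the query result in a pandas DataFrame `df`, the same function could be applied per frame by iterating over `zip(df['yolov5.labels'], df['yolov5.scores'])`.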

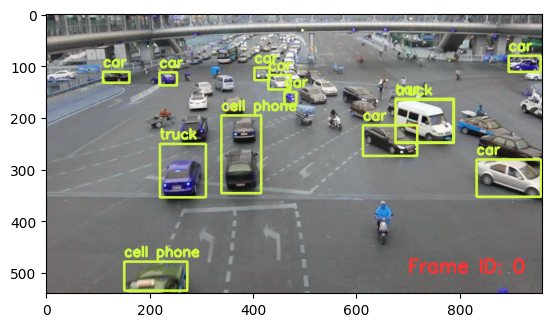

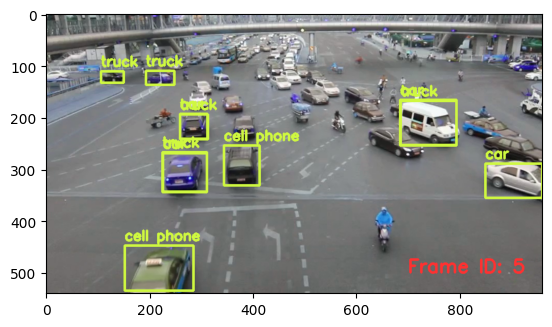

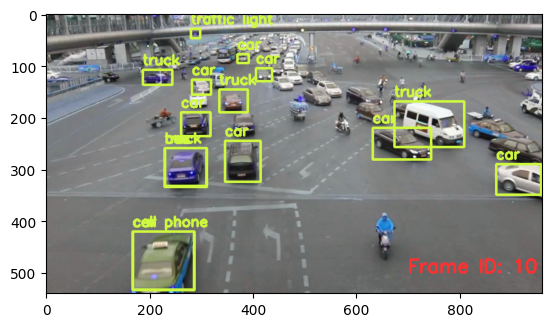

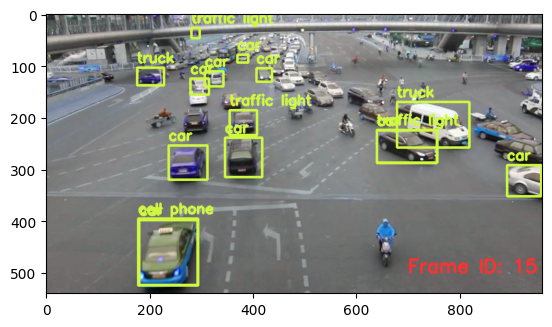

Visualizing output of the Object Detector on the video#

import cv2
from matplotlib import pyplot as plt

def annotate_video(detections, input_video_path, output_video_path):
    color1 = (207, 248, 64)
    color2 = (255, 49, 49)
    thickness = 4

    vcap = cv2.VideoCapture(input_video_path)
    width = int(vcap.get(cv2.CAP_PROP_FRAME_WIDTH))
    height = int(vcap.get(cv2.CAP_PROP_FRAME_HEIGHT))
    fps = vcap.get(cv2.CAP_PROP_FPS)
    fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')  # codec
    video = cv2.VideoWriter(output_video_path, fourcc, fps, (width, height))

    frame_id = 0
    # Capture frame-by-frame
    # ret is True if a frame was read; frame is the image
    ret, frame = vcap.read()

    while ret:
        # Select the detections for the row whose index equals frame_id
        df = detections
        df = df[['yolov5.bboxes', 'yolov5.labels']][df.index == frame_id]
        if df.size:
            dfLst = df.values.tolist()
            for bbox, label in zip(dfLst[0][0], dfLst[0][1]):
                x1, y1, x2, y2 = bbox
                x1, y1, x2, y2 = int(x1), int(y1), int(x2), int(y2)
                # object bbox
                frame = cv2.rectangle(frame, (x1, y1), (x2, y2), color1, thickness)
                # object label
                cv2.putText(frame, label, (x1, y1 - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.9, color1, thickness)
            # frame label
            cv2.putText(frame, 'Frame ID: ' + str(frame_id), (700, 500), cv2.FONT_HERSHEY_SIMPLEX, 1.2, color2, thickness)
        video.write(frame)

        # Stop at frame 20 (the previous query only selected id < 20)
        if frame_id == 20:
            break

        # Show every fifth frame; OpenCV stores frames as BGR, matplotlib expects RGB
        if frame_id % 5 == 0:
            plt.imshow(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))
            plt.show()

        frame_id += 1
        ret, frame = vcap.read()

    video.release()
    vcap.release()

from ipywidgets import Video

input_path = 'ua_detrac.mp4'

output_path = 'video.mp4'

dataframe = response.batch.frames

annotate_video(dataframe, input_path, output_path)

Video.from_file(output_path)
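The annotation loop truncates each floating-point box to integer pixel coordinates and draws the label 10 pixels above the top-left corner. For a box near the top edge, that places the text outside the frame. Below is a minimal sketch of helpers that convert a box and keep the label anchor inside the image. `to_pixel_box` and `label_anchor` are hypothetical names, the 960x540 frame size is an assumption, and the clamping is an addition to what the tutorial code does.

```python
def to_pixel_box(bbox, width, height):
    """Convert a float (x1, y1, x2, y2) box to ints clamped to the frame."""
    x1, y1, x2, y2 = (int(v) for v in bbox)  # int() truncates toward zero
    x1, x2 = max(0, min(x1, width - 1)), max(0, min(x2, width - 1))
    y1, y2 = max(0, min(y1, height - 1)), max(0, min(y2, height - 1))
    return x1, y1, x2, y2


def label_anchor(box, offset=10):
    """Put the label above the box, or below its top edge if there is no room."""
    x1, y1, _, _ = box
    y = y1 - offset if y1 - offset >= 0 else y1 + offset
    return x1, y


# Assumed frame size, for illustration only.
WIDTH, HEIGHT = 960, 540

box = to_pixel_box([613.05, 216.29, 718.17, 275.64], WIDTH, HEIGHT)
print(box, label_anchor(box))  # (613, 216, 718, 275) (613, 206)

edge = to_pixel_box([-3.2, 4.9, 1000.0, 600.0], WIDTH, HEIGHT)
print(edge, label_anchor(edge))  # (0, 4, 959, 539) (0, 14)
```

The returned tuples can be passed to `cv2.rectangle` and `cv2.putText` in place of the raw `int(...)` conversions.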

Dropping a User-Defined Function (UDF)#

cursor.execute("DROP UDF YoloV5;")

response = cursor.fetch_all()

print(response)

@status: ResponseStatus.SUCCESS

@batch:

0

0 UDF YoloV5 successfully dropped

@query_time: 0.017834065947681665