Object Detection Tutorial#


Connect to EvaDB#

%pip install --quiet "evadb[vision,notebook]"

import evadb

cursor = evadb.connect().cursor()

Note: you may need to restart the kernel to use updated packages.

Download the Surveillance Videos#

In this tutorial, we focus on the UA-DETRAC benchmark. UA-DETRAC is a challenging real-world multi-object detection and multi-object tracking benchmark. The dataset consists of 10 hours of videos captured with a Canon EOS 550D camera at 24 different locations in Beijing and Tianjin, China.

# Getting the video files

!wget -nc "https://www.dropbox.com/s/k00wge9exwkfxz6/ua_detrac.mp4?raw=1" -O ua_detrac.mp4

File ‘ua_detrac.mp4’ already there; not retrieving.

Load the videos for analysis#

We use a regular expression to load all matching videos into the table in a single command. Here the pattern matches the single file ua_detrac.mp4.

cursor.query("DROP TABLE IF EXISTS ObjectDetectionVideos;").df()

cursor.load(file_regex='ua_detrac.mp4', format="VIDEO", table_name='ObjectDetectionVideos').df()

|   | 0 |
|---|---|
| 0 | Number of loaded VIDEO: 1 |

Register YOLO Object Detector as a User-Defined Function (UDF) in EvaDB#

cursor.query("""

CREATE UDF IF NOT EXISTS Yolo

TYPE ultralytics

'model' 'yolov8m.pt';

""").df()

|   | 0 |
|---|---|
| 0 | UDF Yolo already exists, nothing added. |

Run the YOLO Object Detector on the video#

yolo_query = cursor.table("ObjectDetectionVideos")

yolo_query = yolo_query.filter("id < 20")

yolo_query = yolo_query.select("id, Yolo(data)")

response = yolo_query.df()

print(response)

objectdetectionvideos.id \

0 0

1 1

2 2

3 3

4 4

5 5

6 6

7 7

8 8

9 9

10 10

11 11

12 12

13 13

14 14

15 15

16 16

17 17

18 18

19 19

yolo.labels \

0 [car, car, car, car, car, car, person, car, ca...

1 [car, car, car, car, car, car, car, car, perso...

2 [car, car, car, person, car, car, car, car, ca...

3 [car, car, car, car, car, car, person, car, ca...

4 [car, car, car, car, car, car, person, car, ca...

5 [car, car, car, car, car, car, car, person, ca...

6 [car, car, car, car, person, car, car, car, ca...

7 [car, car, car, car, car, car, car, car, perso...

8 [car, car, car, car, car, car, car, car, perso...

9 [car, car, car, person, car, car, car, car, ca...

10 [car, car, person, car, car, car, car, car, ca...

11 [car, car, car, person, car, car, truck, car, ...

12 [car, car, person, car, car, car, car, car, ca...

13 [car, car, car, person, car, truck, car, car, ...

14 [car, car, car, car, person, car, car, car, ca...

15 [car, car, car, car, person, car, car, car, ca...

16 [car, car, car, person, car, car, car, car, ca...

17 [car, car, car, car, person, car, car, car, ca...

18 [car, car, person, car, car, car, car, car, ca...

19 [car, person, car, car, car, car, car, car, ca...

yolo.bboxes \

0 [[827.97412109375, 274.3556213378906, 959.1490...

1 [[155.66152954101562, 477.1302795410156, 275.7...

2 [[835.0940551757812, 276.74273681640625, 959.4...

3 [[839.3997802734375, 278.81060791015625, 959.3...

4 [[843.2724609375, 280.5651550292969, 959.40820...

5 [[847.2899780273438, 281.1034240722656, 959.29...

6 [[851.1822509765625, 282.2825927734375, 959.58...

7 [[854.6375732421875, 282.7655029296875, 959.54...

8 [[859.2888793945312, 283.25140380859375, 959.5...

9 [[863.5880126953125, 285.3915710449219, 959.52...

10 [[867.6785888671875, 286.24920654296875, 959.5...

11 [[871.6613159179688, 286.8082275390625, 959.45...

12 [[634.4969482421875, 223.8955535888672, 751.20...

13 [[880.8702392578125, 288.19842529296875, 959.5...

14 [[885.093017578125, 288.404052734375, 959.4323...

15 [[889.7506713867188, 290.65985107421875, 959.4...

16 [[894.7098999023438, 292.442138671875, 959.437...

17 [[899.2138671875, 293.09765625, 959.4815673828...

18 [[184.9110565185547, 388.7672119140625, 300.05...

19 [[649.16552734375, 228.12881469726562, 770.541...

yolo.scores

0 [0.76, 0.75, 0.74, 0.7, 0.67, 0.66, 0.61, 0.61...

1 [0.75, 0.74, 0.7, 0.68, 0.68, 0.66, 0.66, 0.59...

2 [0.84, 0.78, 0.71, 0.68, 0.67, 0.67, 0.66, 0.6...

3 [0.83, 0.77, 0.68, 0.67, 0.66, 0.66, 0.64, 0.6...

4 [0.8, 0.74, 0.73, 0.7, 0.68, 0.67, 0.65, 0.63,...

5 [0.83, 0.76, 0.75, 0.74, 0.67, 0.64, 0.62, 0.6...

6 [0.83, 0.77, 0.74, 0.71, 0.63, 0.63, 0.62, 0.6...

7 [0.82, 0.77, 0.67, 0.65, 0.65, 0.63, 0.62, 0.5...

8 [0.79, 0.78, 0.65, 0.63, 0.62, 0.62, 0.6, 0.59...

9 [0.83, 0.75, 0.66, 0.66, 0.65, 0.63, 0.61, 0.5...

10 [0.76, 0.74, 0.7, 0.67, 0.62, 0.6, 0.54, 0.53,...

11 [0.81, 0.74, 0.68, 0.66, 0.61, 0.57, 0.55, 0.5...

12 [0.8, 0.77, 0.61, 0.59, 0.58, 0.56, 0.55, 0.54...

13 [0.8, 0.76, 0.72, 0.69, 0.6, 0.6, 0.55, 0.55, ...

14 [0.83, 0.76, 0.75, 0.64, 0.64, 0.61, 0.61, 0.5...

15 [0.84, 0.77, 0.76, 0.69, 0.68, 0.63, 0.62, 0.6...

16 [0.81, 0.73, 0.69, 0.68, 0.68, 0.65, 0.6, 0.6,...

17 [0.76, 0.75, 0.68, 0.67, 0.66, 0.64, 0.61, 0.5...

18 [0.79, 0.76, 0.72, 0.67, 0.64, 0.58, 0.57, 0.5...

19 [0.78, 0.74, 0.74, 0.64, 0.61, 0.56, 0.55, 0.5...
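Each row of the result holds three parallel lists for one frame: yolo.labels, yolo.bboxes, and yolo.scores. As a minimal sketch of how such a row might be post-processed, the snippet below keeps the detections at or above a confidence threshold and counts them per label. The helper name `summarize_frame`, the 0.6 threshold, and the sample values are illustrative assumptions; they are not part of EvaDB or of the output above.

```python
from collections import Counter


def summarize_frame(labels, bboxes, scores, min_score=0.6):
    """Keep detections scoring at least min_score and count them per label.

    labels, bboxes and scores are parallel lists, as in one row of the
    Yolo output. The helper name and default threshold are hypothetical.
    """
    kept = [
        (label, bbox, score)
        for label, bbox, score in zip(labels, bboxes, scores)
        if score >= min_score
    ]
    counts = Counter(label for label, _, _ in kept)
    return kept, counts


# Made-up sample row in the same shape as one frame of the output
sample_labels = ["car", "car", "person", "truck"]
sample_bboxes = [
    [827.9, 274.3, 959.1, 380.0],
    [155.6, 477.1, 275.7, 539.0],
    [400.0, 200.0, 420.0, 260.0],
    [600.0, 220.0, 750.0, 330.0],
]
sample_scores = [0.76, 0.75, 0.61, 0.41]

kept, counts = summarize_frame(sample_labels, sample_bboxes, sample_scores)
print(dict(counts))
```

With a real result, the three lists would come from the yolo.labels, yolo.bboxes, and yolo.scores columns of a single row of the response DataFrame.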

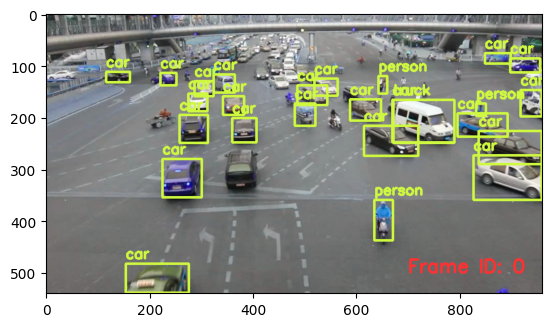

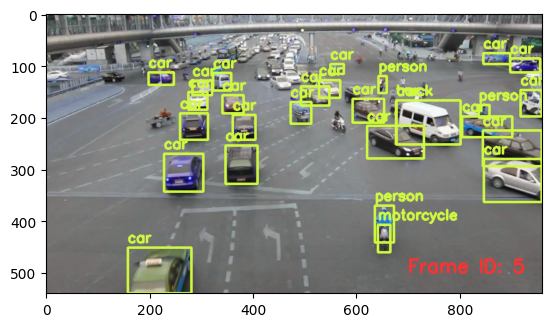

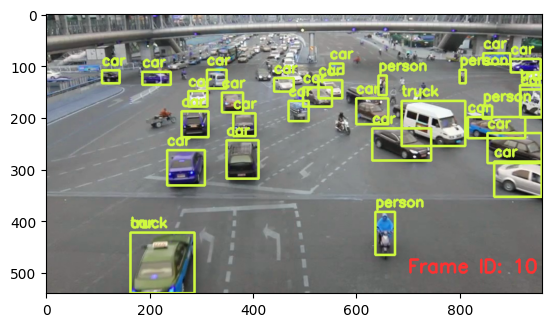

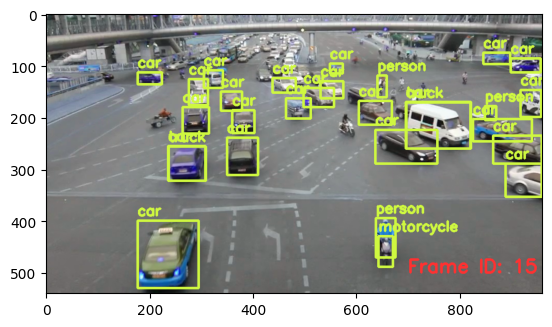

Visualizing output of the Object Detector on the video#

import cv2

from pprint import pprint

from matplotlib import pyplot as plt

def annotate_video(detections, input_video_path, output_video_path):
    color1 = (207, 248, 64)
    color2 = (255, 49, 49)
    thickness = 4

    vcap = cv2.VideoCapture(input_video_path)
    width = int(vcap.get(cv2.CAP_PROP_FRAME_WIDTH))
    height = int(vcap.get(cv2.CAP_PROP_FRAME_HEIGHT))
    fps = vcap.get(cv2.CAP_PROP_FPS)
    fourcc = cv2.VideoWriter_fourcc('m', 'p', '4', 'v')  # codec
    video = cv2.VideoWriter(output_video_path, fourcc, fps, (width, height))

    frame_id = 0
    # Capture frame-by-frame
    # ret is True if a frame was read; frame is the image
    ret, frame = vcap.read()

    while ret:
        # Select the detections for the current frame
        df = detections
        df = df[['yolo.bboxes', 'yolo.labels']][df.index == frame_id]
        if df.size:
            dfLst = df.values.tolist()
            for bbox, label in zip(dfLst[0][0], dfLst[0][1]):
                x1, y1, x2, y2 = bbox
                x1, y1, x2, y2 = int(x1), int(y1), int(x2), int(y2)
                # object bbox
                frame = cv2.rectangle(frame, (x1, y1), (x2, y2), color1, thickness)
                # object label
                cv2.putText(frame, label, (x1, y1-10), cv2.FONT_HERSHEY_SIMPLEX, 0.9, color1, thickness)
            # frame label
            cv2.putText(frame, 'Frame ID: ' + str(frame_id), (700, 500), cv2.FONT_HERSHEY_SIMPLEX, 1.2, color2, thickness)
            video.write(frame)

        # Stop after twenty frames (id < 20 in previous query)
        if frame_id == 20:
            break

        # Show every fifth frame
        if frame_id % 5 == 0:
            # OpenCV frames are BGR; convert to RGB so matplotlib shows true colors
            plt.imshow(cv2.cvtColor(frame, cv2.COLOR_BGR2RGB))
            plt.show()

        frame_id += 1
        ret, frame = vcap.read()

    video.release()
    vcap.release()

from ipywidgets import Video, Image

input_path = 'ua_detrac.mp4'

output_path = 'video.mp4'

annotate_video(response, input_path, output_path)

Video.from_file(output_path)
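annotate_video casts each bounding-box coordinate to int and draws the label 10 pixels above the box, which can place the text outside the image for boxes that touch the top edge. The following is a minimal sketch of a helper that clamps a box to the frame and keeps the label anchor inside it. The name `to_pixel_box`, the default offset, and the 960x540 frame size in the example are assumptions for illustration only.

```python
def to_pixel_box(bbox, width, height, label_offset=10):
    """Convert a float [x1, y1, x2, y2] box to clamped integer pixels.

    Returns ((x1, y1, x2, y2), (label_x, label_y)). Hypothetical helper,
    not part of EvaDB or OpenCV.
    """
    x1, y1, x2, y2 = (int(v) for v in bbox)
    # Clamp every corner into the valid pixel range of the frame
    x1 = max(0, min(x1, width - 1))
    x2 = max(0, min(x2, width - 1))
    y1 = max(0, min(y1, height - 1))
    y2 = max(0, min(y2, height - 1))
    # Anchor the label above the box; if that would leave the frame,
    # fall back to a point below the top edge of the box
    label_y = y1 - label_offset if y1 - label_offset >= 0 else y1 + label_offset
    return (x1, y1, x2, y2), (x1, label_y)


# Example with an assumed 960x540 frame and a box touching the top-right corner
box, label_pos = to_pixel_box([827.97, 4.35, 961.2, 380.9], 960, 540)
print(box, label_pos)
```

The returned tuples could be passed to cv2.rectangle and cv2.putText in place of the raw int casts.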

Drop the function if needed#

cursor.query("DROP UDF IF EXISTS Yolo").df()

|   | 0 |
|---|---|
| 0 | UDF Yolo successfully dropped |